OLYA

designing trust in AI-mediated diagnosis

CONTEXT

What's Olya?

Olya is a concept for completing a full mental health evaluation from home. Instead of waiting months for an appointment or relying on questionable online quizzes, people complete a guided VR assessment. AI organizes the results and drafts a readable report, and a licensed provider reviews and signs off on the outcome. The goal was simple: make evaluation feel accessible, credible, and less intimidating.

Why did we make it?

People delay mental health care. They’re unsure what symptoms mean, afraid of being misdiagnosed, or blocked by cost and access. When they finally get evaluated, they often walk away with paperwork they don’t understand and no clear next steps. We wanted to design something that removes friction before, during, and after evaluation.

What I did.

I worked with one other designer, Macks Brooks, who focused on the VR headset demo experience and brand direction. I built the product interface and prototype, designing the main flows from booking an appointment to viewing results. I also explored how the AI system would function: how it gathers assessment data, produces reports, explains outcomes, and guides people toward next steps. My focus was making the experience feel clear, calm, and trustworthy, especially after results are delivered.

How did we get here? Research.

We started wide. Before jumping into mental health, we looked at broader problems in healthcare: access, cost, confusion, and the emotional weight of navigating medical systems. We talked to people about their general experiences with seeking care. What came up again and again was hesitation. People wait. They second-guess. They search the internet instead of seeing a professional.

That led us to mental health. The barriers were sharper, the stakes were higher, and the uncertainty was constant. From there, we studied how mental health evaluations actually work: the clinical steps, diagnostic methods, and provider workflows. We didn’t want to “disrupt” the process blindly. We wanted to respect and understand it, and design around it instead of against it.

Alongside this, we researched trust in digital health experiences. What makes something feel legitimate instead of sketchy? What visual and interaction choices signal credibility, care, and safety?

Solution

Let's break the design down real quick.

Traditional clinical assessments demand vulnerability with strangers in unfamiliar settings. But introducing AI raises new questions: How do you design an AI system people will trust with their mental health? What does trustworthy AI actually look like?

Part 1: The Exam.

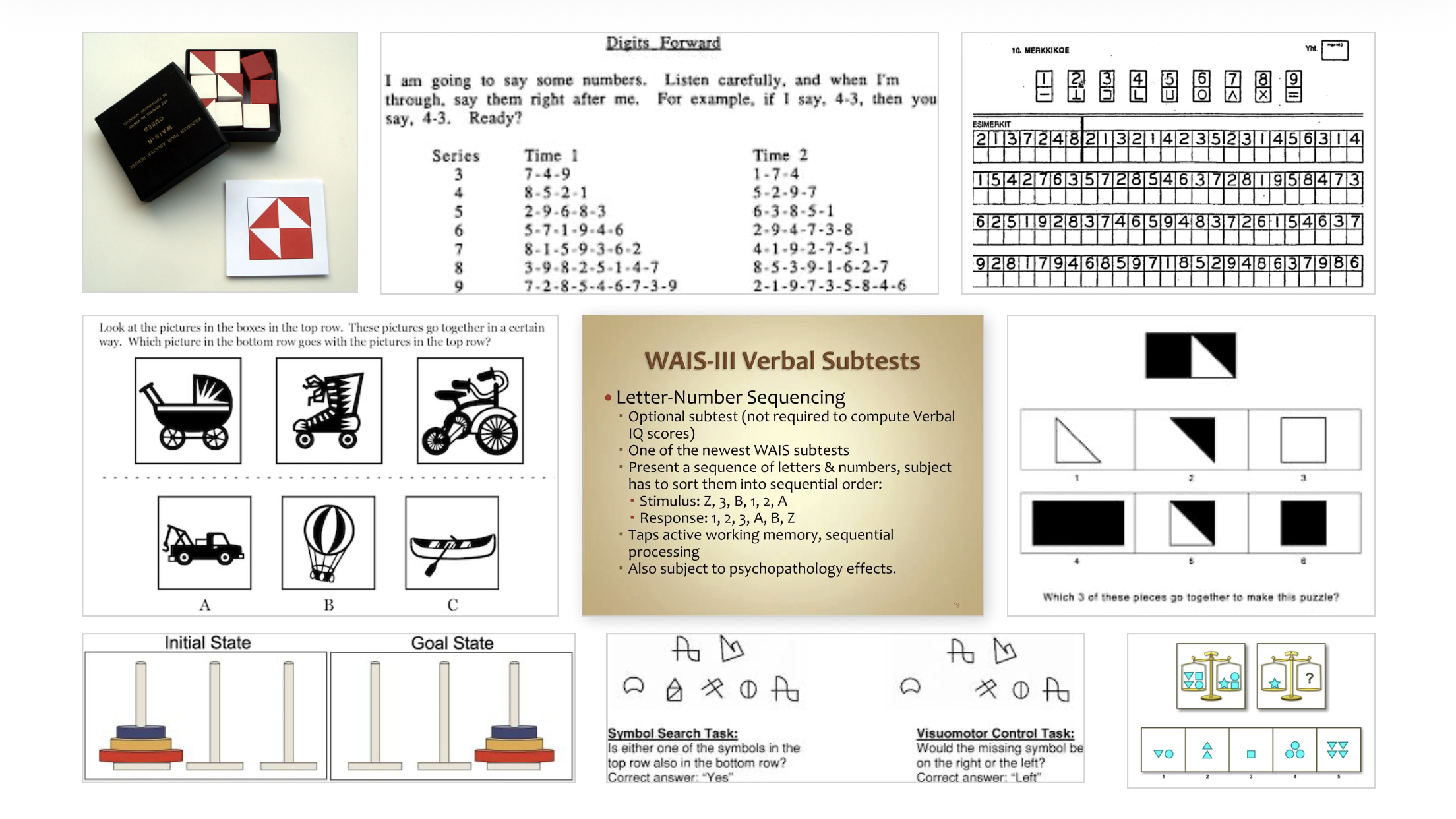

Patients receive a prescribed headset at home, with simple instructions built in. Inside, they complete a guided mental health evaluation based on established clinical exams, including WAIS-style cognitive assessments. The test includes puzzles, activities, and structured questions designed to mirror real diagnostic processes.

Everything happens in their own space, on their own time. When they’re finished, they pack the headset up and ship it back.

Part 2: The Report.

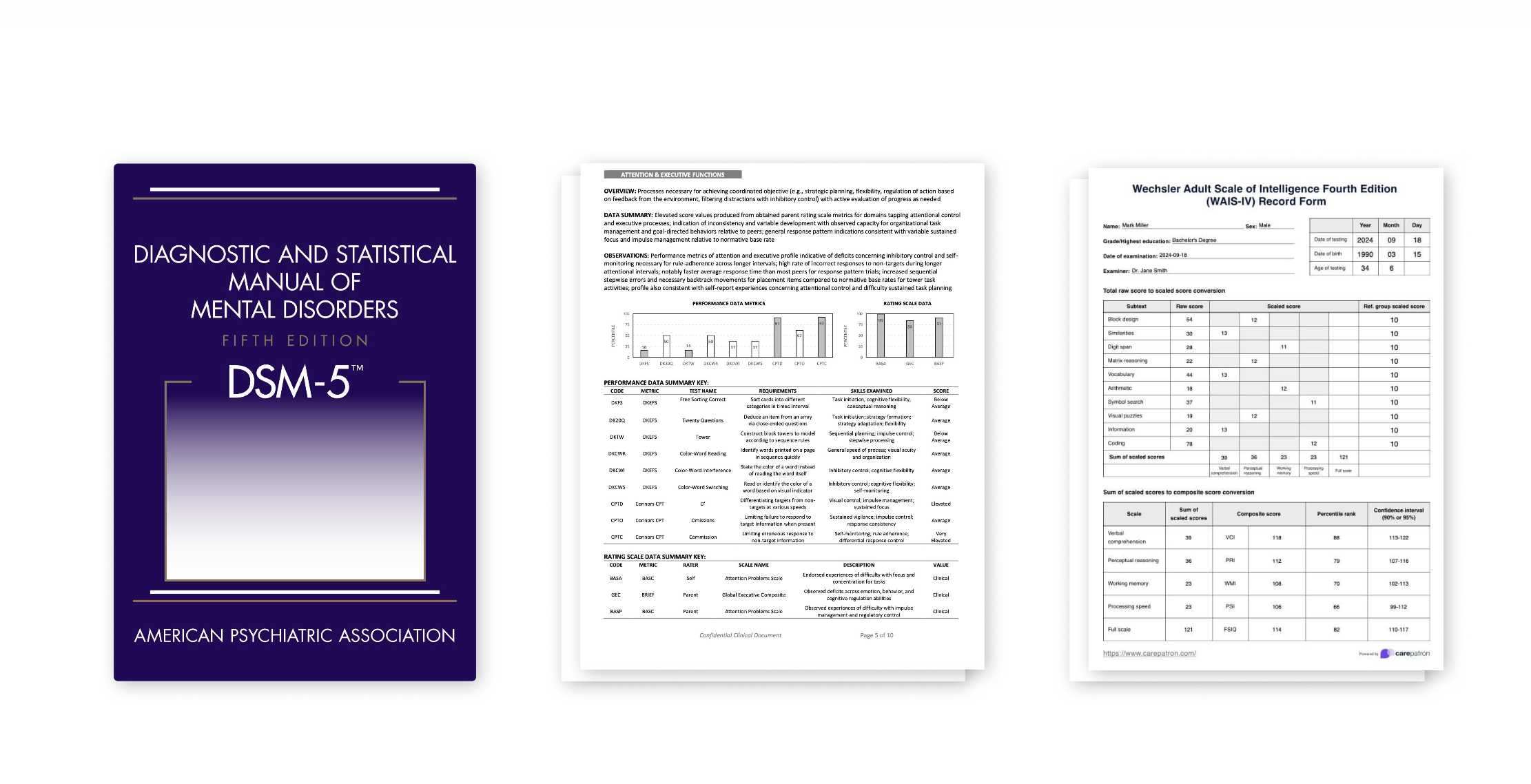

After the exam, neuropsychologists review the data. An AI system trained on DSM-5 criteria, WAIS frameworks, and past evaluation patterns assists by organizing findings and drafting report language, not by making diagnoses on its own. A licensed provider signs off on every outcome.

The final report appears in the patient’s MyChart health portal with clear explanations, recommended treatments, and relevant context. The AI also translates clinical language into plain language, answers quick questions, and helps people understand what they’re reading.

Outcome

Reflecting and feeling proud.

We delivered a complete concept: a VR-based exam experience, an AI-assisted reporting system, and a working interactive prototype showing how patients move from uncertainty to clarity. Beyond the assessment itself, the project focused on the moment after evaluation, where most systems leave people confused. Olya reframes that moment into one of understanding and direction.

The next step would be testing whether this approach actually improves comprehension, trust, and follow-through. But as a design exploration, it establishes a realistic, respectful model for how emerging technologies like VR and AI could support mental health care without replacing the people at its center.